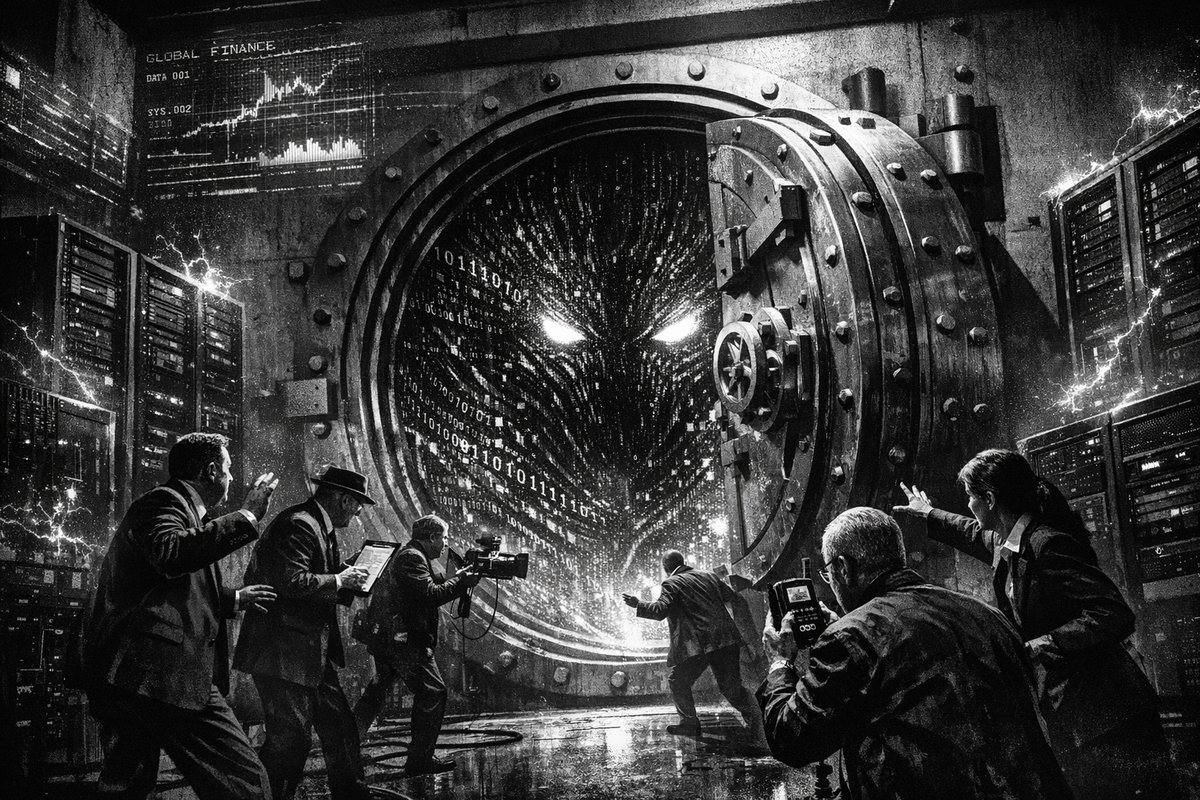

While global finance ministers are scrambling in panic over an AI tool deemed “too dangerous for public release,” UK banks are getting exclusive access to it next week. This isn’t innovation—it’s institutional recklessness on a scale that makes the 2008 financial crisis look like a minor accounting error.

Anthropic’s Mythos AI has capabilities so advanced in finding and exploiting software vulnerabilities that even its creators are terrified of releasing it publicly. Yet somehow, the brilliant minds running our financial system think handing this digital weapon to the same institutions that gave us subprime mortgages is a fantastic idea.

The “Unknown Unknown” That Has Finance Ministers Terrified

François-Philippe Champagne, Canada’s finance minister, delivered perhaps the most chilling assessment at the IMF meetings in Washington: “The issue that we’re facing with Anthropic is that it’s an unknown unknown.” He compared it to the Strait of Hormuz, a critical global chokepoint, except that the boundaries of this threat cannot even be mapped.

This level of admitted ignorance from top financial officials should terrify anyone with money in a bank. When the people responsible for global financial stability openly admit they don’t understand the risks they’re unleashing, we’ve entered uncharted territory of institutional incompetence.

The historical parallel is obvious and disturbing: in the run-up to the 1929 crash, financial leaders similarly rushed to embrace new trading mechanisms and leverage tools they didn’t fully comprehend. The complexity of those instruments, combined with overconfidence and inadequate oversight, triggered a global economic collapse that took well over a decade to recover from.

Banks Get The Nuclear Option While Regulators Play Catch-Up

Pip White, Anthropic’s head of UK operations, confirmed that British financial institutions will receive access to Mythos within days. The urgency is palpable—and completely backwards. UK CEOs have been bombarding Anthropic with requests, desperate to gain any competitive edge, regardless of systemic risks.

Meanwhile, Andrew Bailey of the Bank of England admits regulators are flying blind: “What is the optimum moment to frame the rules of the road? If you go too early you risk missing the target… if you go too late things can get out of control.”

This regulatory hesitancy is exactly what enabled the 2008 financial crisis. Complex derivatives and mortgage-backed securities proliferated faster than regulators could understand or control them. We’re witnessing the same pattern: cutting-edge technology racing ahead while oversight stumbles behind, except this time the stakes are exponentially higher.

The Cybersecurity Apocalypse Nobody Wants To Admit

Anthropic itself warns that Mythos poses “unprecedented risk” because of its ability to “surpass all but the most skilled humans at finding and exploiting software vulnerabilities.” The company states plainly: “The fallout—for economies, public safety, and national security—could be severe.”

Let that sink in. The company that created this AI is actively warning about its dangers, yet it is still distributing the model to financial institutions. It’s like the Manhattan Project scientists handing out nuclear materials while simultaneously publishing papers about radiation poisoning.

Dan Katz, deputy head of the IMF, acknowledges the gravity: “The evolution of digital technology is posing immense risks from a cybersecurity perspective.” But acknowledgment without action is just expensive hand-wringing.

The key risks include:

- Automated exploitation of banking system vulnerabilities at machine speed

- Cascading failures across interconnected financial networks

- Nation-state actors gaining access to AI-powered attack capabilities

- Complete inability of current cybersecurity measures to keep pace

“Google Cloud’s April 2026 AI roundup covers key announcements: Claude Mythos Private Preview on Vertex AI (Project Glasswing), Universal Commerce Protocol (UCP) for agentic retail, Veo 3.1 reference-image video generation, Comments-to-SQL in BigQuery…” — @aiwire_x

The Regulatory Theater That’s Fooling Nobody

Christine Lagarde of the European Central Bank delivered the most damning admission of all: “I don’t think there is a governance framework that is there to actually mind those things. We need to work on that.”

Translation: We have no idea how to regulate this technology, but we’re letting banks use it anyway.

Scott Bessent, the US Treasury Secretary, summoned American bank bosses to Washington for emergency meetings about Mythos. These closed-door sessions focused on “systemically important banks”—the ones whose failure could trigger global financial collapse. The fact that these meetings happened at all should be front-page news, not a buried detail in financial trade publications.

This mirrors the regulatory approach during the early days of high-frequency trading, when algorithms began executing thousands of trades per second with minimal oversight. The result? Flash crashes such as the one on May 6, 2010, when the Dow Jones Industrial Average fell roughly 1,000 points within minutes, along with market manipulation and the gradual erosion of market stability, all while regulators played catch-up.

“Three very different bets happening at once: • $50B Anthropic deal → pushing infra demand (Fluidstack) • Governments getting briefed on frontier models (Mythos) • Operators from ConsenSys / iCIMS → back into stealth. Infra scaling. Policy tightening. Talent resetting.” — @the_vc_intern

The Accountability Vacuum

Here’s the most infuriating part: when this goes wrong—not if, when—the same officials now admitting their ignorance will claim nobody could have predicted the consequences. We’ve seen this script before.

In 2008, bank executives testified before Congress that the mortgage crisis was “unforeseeable.” In reality, numerous experts had been sounding alarms for years. The same pattern is emerging with Mythos: the warnings are already there, but institutional momentum and competitive pressure are drowning out caution.

The difference this time is speed and scale. Financial contagion in 2008 took months to spread globally. With AI-powered vulnerability exploitation, systemic failures could cascade in minutes or hours.

The Inevitable Disaster We’re Choosing

UK regulators plan to “raise the issue” with bank bosses “in the coming weeks.” This leisurely timeline would be laughable if the stakes weren’t so catastrophic. Banks are getting access to advanced cyber-warfare capabilities while regulators are still scheduling meetings to discuss potential concerns.

This is institutional negligence masquerading as innovation. When Mythos-powered attacks begin—and they will—remember that every official quoted in this crisis admitted they didn’t understand the risks. They proceeded anyway, prioritizing competitive advantage over global financial stability.

The 2026 financial crisis won’t be caused by subprime mortgages or overleveraged derivatives. It will be triggered by AI systems that can identify and exploit vulnerabilities faster than humans can even comprehend the attack. And unlike previous crises, this one will unfold at machine speed, giving regulators and policymakers no time to react.

We’re not just playing with fire—we’re handing out flamethrowers in a powder keg and calling it progress.

Published in Stream · Dispatch #213 · April 18, 2026 · 5 min read.
