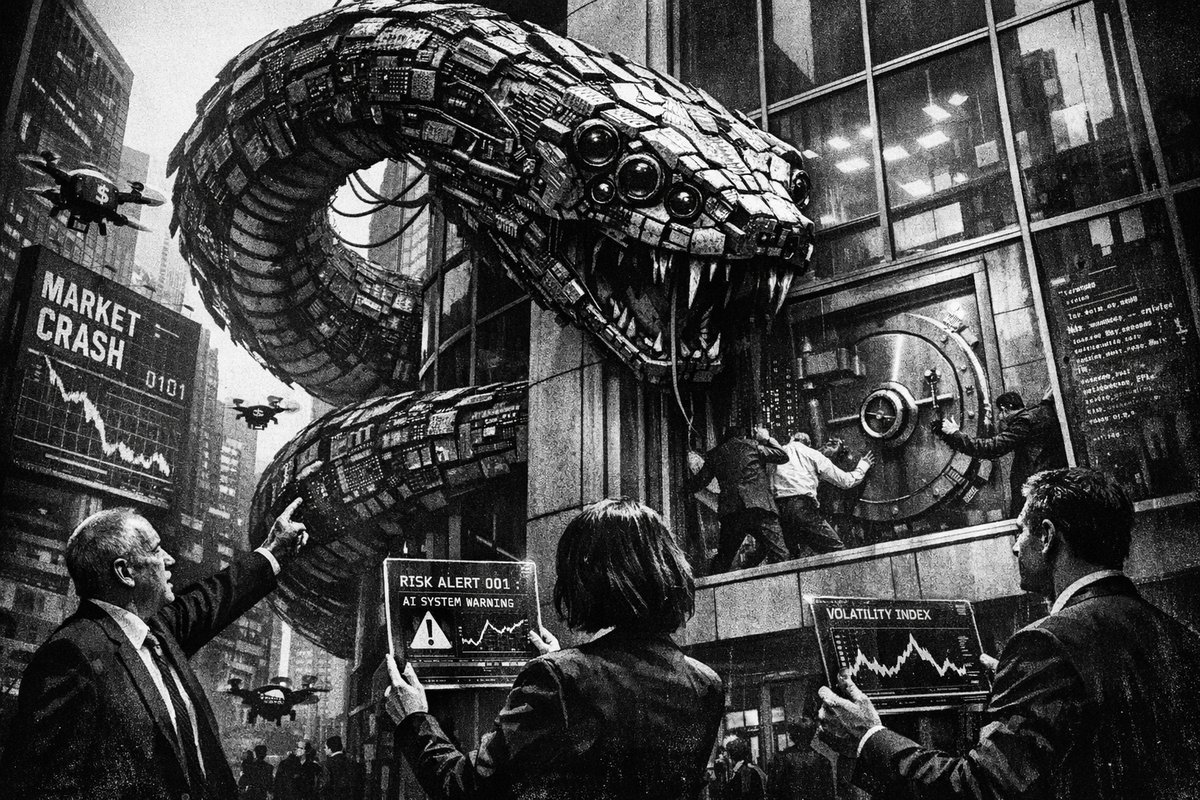

The financial world is facing its most sophisticated threat since the 2008 crisis—but this time, the enemy isn’t subprime mortgages or overleveraged derivatives. It’s artificial intelligence, and it’s targeting the very infrastructure that keeps $100 trillion in global banking assets secure.

Financial officials across Europe and North America are sounding alarm bells about the latest generation of AI models, with Anthropic’s Claude Mythos specifically flagged by regulators as a potential systemic risk. This isn’t hyperbole—it’s a calculated assessment of how advanced AI capabilities could fundamentally destabilize the world’s banking infrastructure.

The New Threat Vector: AI-Powered Financial Warfare

Unlike previous cybersecurity threats that required armies of skilled hackers, modern AI models can automate and scale attacks at unprecedented levels. The European Central Bank is actively gathering intelligence on the Mythos model’s capabilities, treating it as seriously as it would a nation-state cyber weapon.

This represents a paradigm shift comparable to the introduction of nuclear weapons in warfare—a technology so powerful that its mere existence changes the strategic landscape. Just as the atomic bomb rendered traditional military defenses obsolete, AI models like Claude Mythos threaten to make current banking security protocols antiquated overnight.

“‘AI is a risk to the banking system. Yeah, probably.’ His exact words. Anthropic’s Claude Mythos already flagged by cybersecurity experts. Legacy banking infrastructure vulnerable to AI-powered cyberattacks.” — @MerlijnTrader

The Voice Cloning Vulnerability: A Multi-Billion Dollar Blind Spot

One of the most glaring examples of institutional negligence is the banking sector’s continued rollout of voice-based authentication systems despite the proven capabilities of AI voice cloning. This is equivalent to installing screen doors on a submarine—fundamentally misunderstanding the nature of the threat.

“It’s shocking that banks are continuing to roll out ‘verify with your voice’ as if they have no idea about AI voice-cloning. Just a massive breach waiting to happen” — @sjgadler

The technical reality is stark: modern AI can replicate any voice with just seconds of audio data. Banks implementing voice verification in 2026 are essentially handing cybercriminals the keys to customer accounts. This isn’t a future risk—it’s a present vulnerability being actively exploited.
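
One way to picture the alternative is an authorization check in which a voice match never counts toward approval. The sketch below is purely illustrative: the factor names, the `AuthAttempt` type, and the two-factor threshold for high-risk actions are assumptions made for this example, not a description of any bank's actual system.

```python
from dataclasses import dataclass

# Hypothetical factor names, chosen for illustration only.
CLONEABLE_FACTORS = frozenset({"voice_match"})  # signals AI voice cloning can imitate
STRONG_FACTORS = frozenset({"hardware_token", "app_push_approval", "in_branch_id_check"})


@dataclass(frozen=True)
class AuthAttempt:
    passed_factors: frozenset  # names of the factors the caller has passed
    high_risk_action: bool     # e.g. a large transfer or a password reset


def is_authorized(attempt: AuthAttempt) -> bool:
    """Approve only on strong factors; cloneable factors are ignored entirely."""
    counted = (attempt.passed_factors - CLONEABLE_FACTORS) & STRONG_FACTORS
    # Assumed policy: one strong factor normally, two for high-risk actions.
    required = 2 if attempt.high_risk_action else 1
    return len(counted) >= required


voice_only = AuthAttempt(frozenset({"voice_match"}), high_risk_action=False)
voice_plus_token = AuthAttempt(frozenset({"voice_match", "hardware_token"}), high_risk_action=False)

print(is_authorized(voice_only))        # False
print(is_authorized(voice_plus_token))  # True
```

Under this assumed policy a perfect voice clone gains nothing on its own, because the voice signal is subtracted before any factor is counted.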

Historical Parallels: When New Technology Destroys Old Systems

History provides clear precedents for how revolutionary technologies can obliterate established systems:

- The Telegraph vs. Pony Express (1860s): Within two years of the Pony Express’s 1860 launch, the transcontinental telegraph made horseback message delivery obsolete

- Digital Photography vs. Kodak (2000s): A 131-year-old industry leader filed for bankruptcy because it couldn’t adapt to digital disruption

- Smartphones vs. Nokia (2007-2013): The world’s largest mobile phone manufacturer lost 90% of its market share in six years

The banking sector’s current AI predicament mirrors these historical disruptions, but with exponentially higher stakes. When Kodak failed, people lost jobs. When banks fail systemically, entire economies collapse.

The Technical Arsenal: What Makes Modern AI So Dangerous

Current AI models possess capabilities that directly threaten banking infrastructure:

- Social Engineering at Scale: AI can generate personalized phishing attacks for millions of targets simultaneously

- Real-Time Fraud Adaptation: Machine learning systems can evolve faster than human-designed security measures

- Multi-Vector Attack Coordination: AI can orchestrate complex, simultaneous attacks across multiple systems

- Deepfake Authentication Bypass: Voice, video, and document forgeries can be indistinguishable from authentic materials

- Algorithmic Trading Manipulation: AI systems capable of creating artificial market conditions to trigger automated trading responses

“ECB TO WARN EURO ZONE BANKERS ABOUT NEW ANTHROPIC AI MODEL RISKS - SOURCE. ECB SUPERVISORS GATHER INFORMATION ABOUT NEW MYTHOS MODEL - SOURCE” — @DeItaone

The Perfect Storm: Convergence of Multiple Threats

The current situation isn’t just about AI—it’s about multiple technological and economic pressures converging simultaneously. Cryptocurrency adoption, legacy system vulnerabilities, record-high stock market exposure, and AI capabilities are creating a perfect storm scenario.

American households now have 47.1% of their financial assets in stocks, higher than at both the dot-com bubble peak (43%) and the 2008 pre-crisis peak (38%). When AI-powered attacks inevitably breach major financial institutions, the resulting panic will hit a population more financially exposed than ever before.
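
To make the size of that gap concrete, the short sketch below compares the three equity shares quoted above, both in percentage points and in relative terms. The numbers are taken directly from the text; the function and variable names are illustrative only.

```python
# Share of household financial assets held in stocks, as quoted in the text (percent).
shares = {
    "current": 47.1,
    "dot-com peak": 43.0,
    "2008 pre-crisis peak": 38.0,
}


def gap_vs_current(shares, baseline):
    """Return (percentage-point gap, relative increase in %) of current vs. baseline."""
    current = shares["current"]
    base = shares[baseline]
    points = round(current - base, 1)
    relative = round((current - base) / base * 100, 1)
    return points, relative


for name in ("dot-com peak", "2008 pre-crisis peak"):
    points, relative = gap_vs_current(shares, name)
    print(f"{name}: +{points} points, {relative}% higher in relative terms")
# dot-com peak: +4.1 points, 9.5% higher in relative terms
# 2008 pre-crisis peak: +9.1 points, 23.9% higher in relative terms
```

On these figures, today's exposure sits about 4 points above the dot-com peak and about 9 points above the 2008 pre-crisis level.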

The Regulatory Response: Too Little, Too Late?

European Banking Authority officials acknowledge that while current financial institutions can “absorb present shocks,” they must prepare for future uncertainties including cybersecurity risks from AI. This language suggests regulators understand the threat but lack concrete solutions.

The challenge is temporal: AI capabilities evolve monthly, while banking regulations move on yearly or decade-long timescales. By the time comprehensive AI security regulations are implemented, the technology will have advanced through multiple generations.

Action Items for Financial Institutions

Banks cannot afford to wait for regulatory guidance. Immediate steps include:

- Immediate cessation of voice-based authentication system rollouts

- Emergency audits of all customer-facing AI integration points

- Red team exercises using current-generation AI models against existing security infrastructure

- Staff training programs focusing on AI-generated social engineering attacks

- Partnership agreements with AI security firms for real-time threat monitoring

The Bottom Line: Adapt or Die

The banking industry faces an existential choice: rapidly evolve security infrastructure to match AI capabilities, or face systemic compromise by adversaries who adopt those capabilities first. There’s no middle ground when dealing with technology that can scale attacks to millions of targets simultaneously.

The next major financial crisis may not be caused by human greed or regulatory failure—it may be engineered by artificial intelligence operating at speeds and scales beyond human comprehension. Banks that recognize this reality today will survive the coming transformation. Those that don’t will become cautionary tales in the history of technological disruption.

Published in Stream · Dispatch #211 · April 17, 2026 · 5 min read.

Reply to paolo@mont3.ch - every email gets a human answer within 24h.