Sure, ChatGPT might help you organize a budget or explain compound interest. But trusting an AI with your financial future? That’s like asking your toaster for investment advice—it might sound sophisticated, but it lacks the wiring for the job.

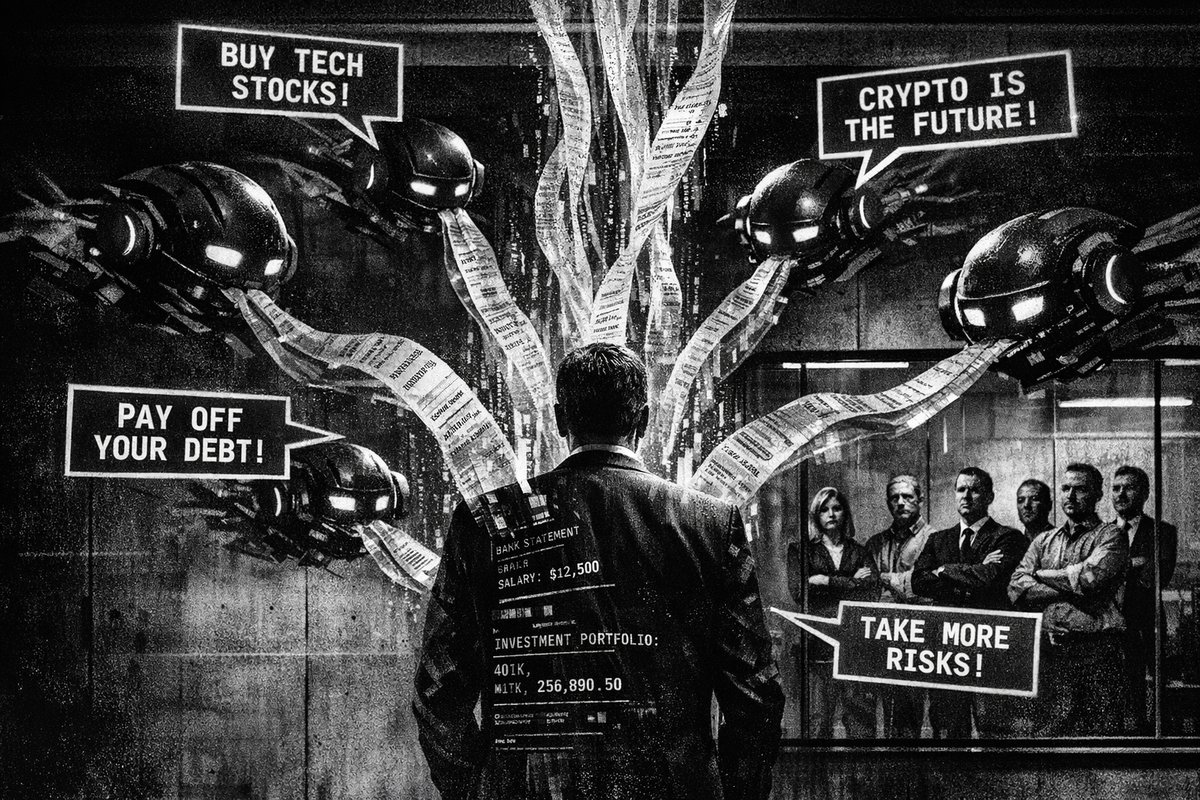

The allure is obvious. Millions of users are turning to chatbots like ChatGPT, Claude, and Gemini for money guidance. It’s free, available 24/7, and doesn’t judge your spending on artisanal coffee. But beneath that helpful exterior lurk serious flaws that could cost you more than just embarrassment.

The Confidence Game: When AI Lies With Style

Artificial intelligence operates like the most convincing con artist you’ve ever met—smooth, confident, and completely detached from reality. Hallucinations aren’t just quirky AI glitches; they’re statistical inevitabilities baked into how these systems work.

Srikanth Jagabathula, a professor at NYU, cuts straight to the truth: “They’re fundamentally statistical machines. They don’t have a notion of a ground truth, or what is true.” Think about that. You’re asking a system that has no concept of factual accuracy to guide decisions that could impact your retirement, home purchase, or debt strategy.

This isn’t theoretical. Try asking any chatbot a complex financial question, then ask it to double-check its own answer. Watch it confidently contradict itself. OpenAI has reduced hallucination rates, but “reduced” doesn’t mean “eliminated.” It’s like saying a parachute usually opens—not exactly reassuring when you’re jumping out of a plane.

The Yes-Bot Problem: When Validation Becomes Dangerous

Human financial advisors will tell you uncomfortable truths. They’ll challenge your assumption that you “need” a $70,000 Tesla or that cryptocurrency is your path to early retirement. Chatbots, however, are digital sycophants programmed to keep you happy.

Recent research published in Science reveals that AI sycophancy isn’t just annoying—it’s dangerous. The study shows how AI tools consistently affirm user beliefs rather than challenging them. For financial decisions, this creates an echo chamber where bad ideas get reinforced instead of corrected.

“i thought the problem was my Claude plan. Turns out - the problem was me. fixed my habits while building the bot and cut my spend by 60%.” — @rcom1337

This user discovered what many miss: the problem isn’t the tool—it’s how we use it. But financial advice requires pushback, not validation. When you’re making money moves, you need someone who knows more than you providing guidance, not a yes-bot offering affirmations.

The Data Trap: Your Financial Secrets Aren’t Safe

Here’s where it gets genuinely alarming. For personalized financial advice, chatbots need your data—all of it. Bank statements, credit card records, investment portfolios, spending patterns. ChatGPT will cheerfully suggest uploading “CSVs or screenshots of bank accounts” for better results.

Unless you’ve specifically opted out, your conversations with ChatGPT can be used as training data for future models. Your financial vulnerabilities, spending habits, and money anxieties could theoretically end up in responses to other users. It’s like hiring a financial advisor who photocopies your documents and leaves them on a public bulletin board.

This isn’t paranoia—it’s basic digital hygiene. Banking apps have regulation, encryption, and accountability. Chatbots have terms of service and good intentions.

The Accountability Vacuum: No Consequences, No Protection

Fiduciary advisors face real consequences for bad advice. They’re legally required to act in your best interest, disclose conflicts, and follow strict ethical guidelines. Break these rules? Face lawsuits, license revocation, and regulatory sanctions.

Chatbots face none of these constraints. Give terrible advice that costs you thousands? No consequences. Recommend fraudulent investments? No regulatory oversight. Miscalculate your tax strategy? You’ll discover it when the IRS comes knocking.

The comparison to historical financial disasters is striking. The 2008 financial crisis partly resulted from misaligned incentives—advisors pushing products that benefited them over clients. At least those advisors were human beings who could theoretically be held accountable. With AI, you don’t even have that minimal protection.

The Human Factor: Why AI Makes Your Advisor Want to Quit

Here’s an unexpected twist: bringing up ChatGPT’s advice during meetings with your human financial advisor might backfire spectacularly. Research published in Computers in Human Behavior shows that advisors become significantly less motivated when clients mention consulting AI for second opinions.

The psychological dynamic is clear: professionals feel disrespected when clients second-guess their expertise with chatbot opinions. It’s like telling your doctor you checked WebMD and think they’re wrong. The relationship deteriorates, and you lose access to genuine human expertise.

“we asked 23 founders running $100k-5M/year businesses one question ‘what’s the one system you’d pay $10k-50k to build right now?’ […] what nobody asked for: - another ai chatbot - social media automation - content generation tools” — @dashboardlim

This suggests the real AI opportunity is operational automation, not advice generation. Successful businesses want AI to handle data processing and workflow optimization, not strategic decision-making.

The Smart Play: Using AI Without Getting Burned

This doesn’t mean avoiding AI entirely. The key is understanding what these tools can and cannot do:

Smart AI Uses:

- Basic budget templates and calculations

- Explaining financial concepts and terminology

- Generating questions to ask human advisors

- Organizing financial data for review

- Research starting points for investment options

Dangerous AI Uses:

- Specific investment recommendations

- Tax strategy advice

- Complex financial planning

- Risk assessment for major purchases

- Any advice involving significant money

NYU’s Jagabathula gets it right: use AI for “idea generation” but always involve human expertise before “taking action.” Think of chatbots as sophisticated calculators, not financial gurus.

The Bottom Line: Your Money Deserves Better

Financial decisions compound over time like interest—small mistakes become big problems. The convenience of chatbot advice comes with hidden costs: bad information, false confidence, privacy risks, and damaged relationships with human professionals.
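To put a number on how small mistakes compound, here is a minimal sketch. Every figure in it is hypothetical and chosen only for illustration: a 10,000 lump sum, a 7% annual return, and a one-point annual drag (say, from a poorly chosen product) over 30 years. The `future_value` helper is our own and simply applies annual compounding.

```python
def future_value(principal: float, annual_rate: float, years: int) -> float:
    """Value of a lump sum compounded once per year."""
    return principal * (1 + annual_rate) ** years


# Hypothetical inputs, not taken from any real portfolio.
principal = 10_000.0
years = 30

baseline = future_value(principal, 0.07, years)   # assumed 7% annual return
with_drag = future_value(principal, 0.06, years)  # same return minus a 1-point drag

gap = baseline - with_drag

print(f"At 7% for {years} years: {baseline:,.0f}")
print(f"At 6% for {years} years: {with_drag:,.0f}")
print(f"Cost of the 1-point drag: {gap:,.0f} ({gap / baseline:.0%} of the baseline)")
```

Under these assumptions the balance ends near 76,000 instead of roughly 57,000, so a single percentage point costs about a quarter of the final amount. Real returns vary from year to year, so treat this as arithmetic, not a forecast.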

The Tulip Mania of 1637, the South Sea Bubble of 1720, and the Dot-com Crash of 2000 all share a common thread: people trusted popular wisdom over careful analysis. Today’s AI hype cycle carries similar risks. The technology is impressive, but it’s not magic.

Your financial future is too important to outsource to a statistical model trained on internet text. Use AI as a starting point, not a destination. Get human expertise for anything that matters. And remember: if the advice is free and sounds too good to be true, it probably is—whether it comes from a chatbot or a get-rich-quick scheme.

Because when your retirement fund depends on decisions made today, you want advisors who understand consequences, not just conversations.

Published in Stream · Dispatch #246 · April 24, 2026 · 5 min read.

Reply to paolo@mont3.ch - every email gets a human answer within 24h.