The AI industry’s memory shortage has reached a breaking point. Anthropic, the company behind Claude, is now in early talks to purchase inference chips from Fractile, a UK startup that won’t even have commercial products until 2027. This unprecedented move signals just how desperate AI companies have become to escape the stranglehold of traditional memory architectures and soaring costs.

The SRAM Revolution: Trading Capacity for Speed

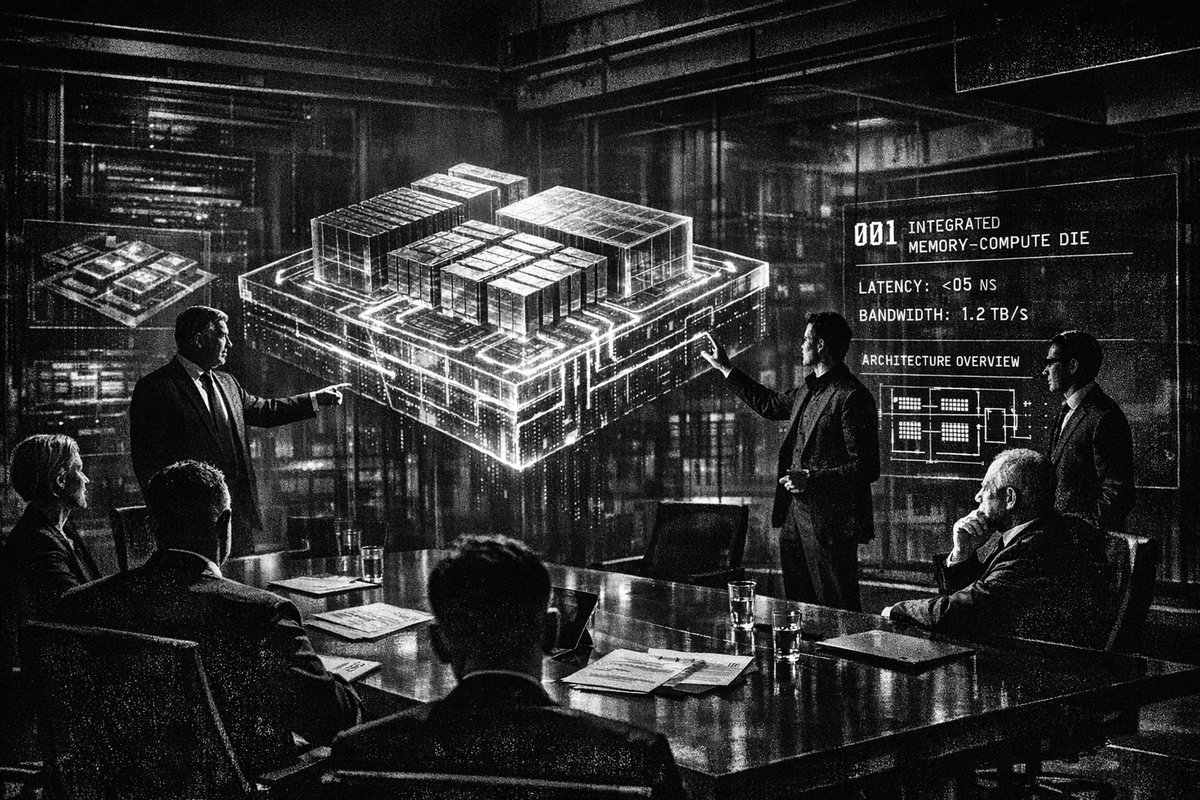

Fractile’s approach represents a fundamental shift in chip design philosophy. Instead of relying on separate DRAM chips that create bottlenecks when shuttling data back and forth, the company co-locates memory and compute on the same die using SRAM. Think of it as the difference between a library where books are stored in a distant warehouse and one where every desk has its own bookshelf.
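To see why the location of memory matters so much, here is a rough back-of-envelope sketch in Python. It assumes the simplest case: a dense model generating one token at a time, where every weight has to be read from memory once per token, so memory bandwidth sets a floor on speed. The bandwidth figures and model size are illustrative placeholders, not specifications of any Fractile, Nvidia, or other real chip.

```python
from dataclasses import dataclass


@dataclass
class MemorySystem:
    """A memory system described only by its sustained bandwidth (hypothetical)."""
    name: str
    bandwidth_gb_per_s: float


def min_seconds_per_token(params_billion: float,
                          bytes_per_param: float,
                          memory: MemorySystem) -> float:
    """Lower bound on per-token time if all weights are read once per token.

    Ignores compute time, caching, and batching; it only models the cost
    of moving the weights from memory to the compute units.
    """
    weight_bytes = params_billion * 1e9 * bytes_per_param
    bandwidth_bytes_per_s = memory.bandwidth_gb_per_s * 1e9
    return weight_bytes / bandwidth_bytes_per_s


# Illustrative placeholder numbers only, chosen to show the shape of the math.
off_chip_dram = MemorySystem("off-chip DRAM", bandwidth_gb_per_s=1_000.0)
on_die_sram = MemorySystem("on-die SRAM", bandwidth_gb_per_s=50_000.0)

for memory in (off_chip_dram, on_die_sram):
    t = min_seconds_per_token(params_billion=70, bytes_per_param=1, memory=memory)
    print(f"{memory.name}: at least {t * 1000:.2f} ms per token")
```

Under these assumptions the speedup is simply the ratio of the two bandwidths. Real systems batch requests and overlap compute with memory traffic, so actual gains depend heavily on the workload.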

This architectural change is more than incremental. Walter Goodwin, Fractile’s founder and an Oxford PhD, claims the company’s design could run large language models 100 times faster and 10 times cheaper than Nvidia’s current GPUs. These numbers come from simulations rather than real-world testing, but the potential impact explains why investors are reportedly discussing a $1 billion valuation for a company that has not yet manufactured test chips.

“Anthropic is talking to a small UK chip startup called Fractile about buying their inference chips once they’re ready next year, basically another move to stop being so dependent on Nvidia. What’s really interesting is that Fractile is using the Anthropic deal as a selling point to raise $100 m from investors, so Anthropic’s buying power is literally shaping who gets funded in the chip world.” — @kimmonismus

Historical Parallels: When Scarcity Drives Innovation

This scenario mirrors critical moments in industrial and computing history when resource constraints forced architectural breakthroughs. During the 1970s oil crisis, automakers abandoned gas-guzzling V8 engines for fuel-efficient designs. Similarly, the 2008 financial crisis pushed cloud computing adoption as companies sought cheaper alternatives to expensive on-premise infrastructure.

The current memory crunch is driving similar desperation-fueled innovation. Samsung, SK Hynix, and Micron are prioritizing HBM (High Bandwidth Memory) for AI infrastructure, leaving conventional memory scarce for everything else. The ripple effects are staggering:

- Microsoft’s Surface Pro pricing jumped from $999 to $1,499

- Lenovo reported memory costs surged 40-50% last quarter

- Intel’s CEO warned there may be no relief until 2028

- Smartphone shipments declined 4-6% in Q1 2026

Anthropic’s Multi-Vendor Strategy: Learning from History’s Mistakes

Anthropic’s approach of diversifying chip suppliers reflects hard-learned lessons from supply chain disasters. The company already uses chips from Nvidia, Google, and Amazon, and Fractile could become a fourth source. This strategy echoes Toyota’s response to the 1997 Aisin fire, which shut down nearly all Toyota production in Japan because the company relied on a single supplier for a critical component.

The stakes couldn’t be higher. Anthropic’s annualized revenue run rate hit $30 billion in March, up from $9 billion at the end of 2025. But inference costs are eating into margins, forcing the company to seek alternatives to expensive traditional architectures.

“They’re buying something that hasn’t even launched yet?” — @jukan05

This skeptical reaction highlights the unprecedented nature of this deal. Unlike typical procurement cycles where companies evaluate proven hardware, AI giants are now pre-ordering chips based on simulations and promises.

The Broader Memory Architecture War

Fractile isn’t alone in pursuing memory-centric designs. Groq and Cerebras are also developing inference-focused architectures, with Nvidia acquiring Groq for $20 billion in December 2025. This acquisition wave mirrors the graphics card consolidation of the late 1990s and 2000s, when 3dfx, ATI, and dozens of smaller players were absorbed as the market matured.

The technical challenge is immense. SRAM is faster than DRAM, but it costs significantly more per bit and takes up more die area for the same capacity. It’s like choosing between a small, expensive downtown apartment (SRAM) and a larger suburban house with a long commute (DRAM). The question is whether the speed benefits justify the cost and space trade-offs at scale.
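The capacity side of that trade-off can be sketched with simple arithmetic: if a model’s weights must fit entirely in on-die SRAM, the small capacity per chip translates directly into a larger chip count. The Python snippet below is a minimal illustration; the per-chip SRAM capacity and the model size are hypothetical values, not figures from Fractile or any other vendor.

```python
def chips_needed(weight_bytes: int, sram_bytes_per_chip: int) -> int:
    """Smallest number of chips whose combined SRAM can hold all weights.

    Uses integer ceiling division, and ignores space needed for activations,
    caches, and any duplication of weights across chips.
    """
    if sram_bytes_per_chip <= 0:
        raise ValueError("sram_bytes_per_chip must be positive")
    return -(-weight_bytes // sram_bytes_per_chip)


GB = 10**9

# Hypothetical example: 70 GB of weights, 0.5 GB of SRAM per chip.
model_weight_bytes = 70 * GB
sram_per_chip_bytes = GB // 2

print(chips_needed(model_weight_bytes, sram_per_chip_bytes), "chips")
```

Halving the per-chip SRAM doubles the chip count in this model, which is why the per-bit cost and area of SRAM matter so much once deployments reach production scale.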

What This Means for the Industry

This move signals three critical trends:

- AI inference costs remain a major bottleneck despite massive revenue growth

- Traditional memory architectures are fundamentally inadequate for next-generation AI workloads

- Supply chain diversification has become a strategic imperative, not just a risk management exercise

The 2027 timeline for Fractile’s commercial readiness places it in the same window as Anthropic’s expanded Google-Broadcom TPU partnership, suggesting the company is betting on multiple architectural approaches simultaneously.

The Bottom Line: Betting on the Future

Anthropic’s willingness to negotiate deals for non-existent hardware reveals just how constrained the current AI infrastructure landscape has become. This isn’t just about buying chips — it’s about securing the architectural foundation for the next generation of AI systems.

The success or failure of Fractile’s SRAM-based approach will likely determine whether we see a fundamental shift away from traditional GPU-DRAM architectures or whether the current memory bottlenecks persist for years to come. For an industry built on exponential growth, standing still isn’t an option.