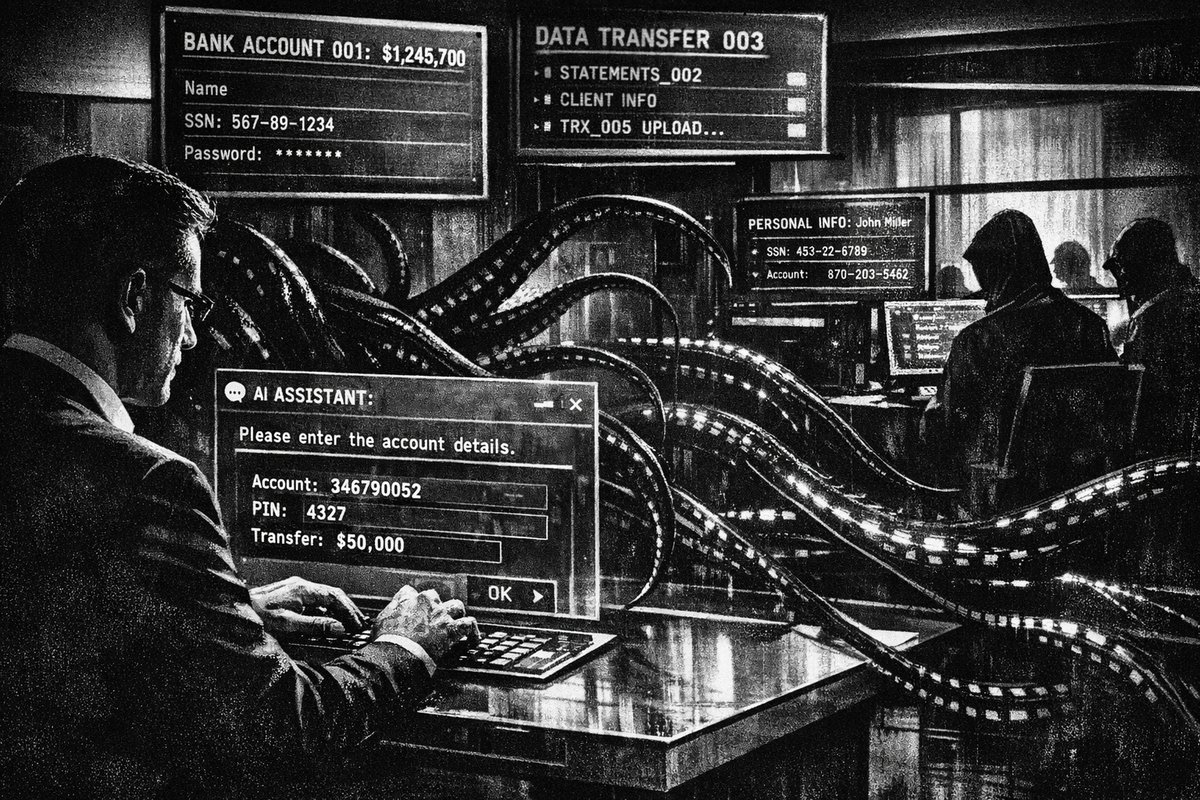

Artificial intelligence has infiltrated our financial lives faster than most people realize. From budgeting apps to investment advisors, AI-powered tools promise personalized guidance and instant answers to complex money questions. But this convenience comes with a dangerous trade-off: we’re oversharing sensitive financial data with systems that may not protect it as securely as we assume.

The parallels to historical intelligence gathering are striking. Just as Cold War spies exploited human psychology to extract state secrets through seemingly innocent conversations, modern AI systems can inadvertently become repositories for the exact information criminals need to drain your accounts.

The Oversharing Problem: When Convenience Becomes Catastrophic

AI chatbots are designed to be helpful, which creates a psychological trap. The more specific information you provide, the better their responses become. This feedback loop encourages users to share increasingly detailed financial data, creating what security experts call a “digital financial profile” that would make identity thieves salivate.

“AI can help with financial advice, but to get useful answers, people often overshare sensitive data. In the wrong hands, that info could lead to fraud or identity theft.” — @washingtonpost

The scale of this problem is already massive. India reported financial losses exceeding ₹22,845 crore in 2024 alone from AI-driven cyber fraud—a staggering 200% increase from the previous year. Nearly 73% of Indians have been personally affected by these sophisticated attacks.

Five Categories of Financial Data to Keep Private

Here are the critical financial details you should never type into an AI system:

- Account numbers and routing information - Even partial numbers can be combined with other data breaches

- Social Security numbers or tax identification - The master key to your entire financial identity

- Specific investment amounts and portfolio details - Creates a wealth profile for targeted attacks

- Login credentials and security questions - Direct access to your accounts

- Personal financial habits and spending patterns - Enables social engineering attacks
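One way to enforce the first two categories is to scrub a prompt before it is ever pasted into a chatbot. The sketch below is a minimal illustration, not a complete safeguard: the `PATTERNS` table and the `redact` helper are hypothetical names, and the patterns assume US-style Social Security numbers and account or routing numbers written as unbroken runs of 8 to 17 digits. Real identifiers vary by country and bank, so anything the patterns miss still has to be caught by eye.

```python
import re

# Hypothetical patterns for illustration only. They assume US-style SSNs
# (123-45-6789) and account/routing numbers written as 8 to 17 digits.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "ACCOUNT_OR_ROUTING": re.compile(r"\b\d{8,17}\b"),
}


def redact(text: str) -> str:
    """Replace every pattern match with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


prompt = "My SSN is 123-45-6789 and my checking account is 000123456789."
print(redact(prompt))
```

Running the redaction locally, before the text leaves your device, is the point of the design: the chatbot never receives the original numbers.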

Historical Parallels: The Intelligence Gathering Playbook

This situation mirrors Operation CHAOS, the CIA domestic surveillance program that began in 1967, when the agency discovered that seemingly innocuous personal details, when aggregated, could reveal comprehensive profiles of individuals. The agency found that people would readily share sensitive information if requests seemed legitimate and helpful.

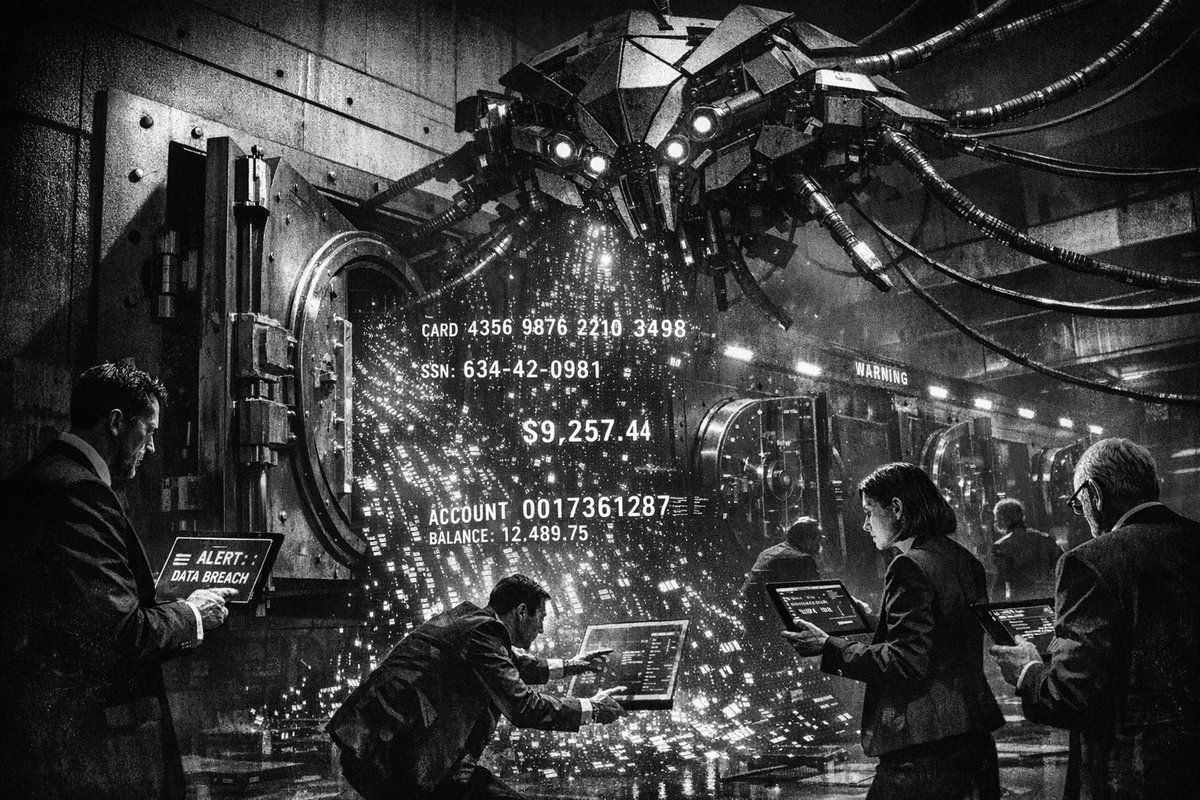

Modern AI systems operate on the same principle. They don’t need to steal your data—you voluntarily provide it in exchange for personalized advice. The difference is that Cold War intelligence operations required human agents and expensive infrastructure, while today’s data collection happens automatically at scale.

The Technical Reality: Where Your Data Really Goes

Unlike traditional financial advisors bound by fiduciary duties and regulatory oversight, AI chatbots operate in a largely unregulated space. Your conversations may be:

- Stored indefinitely on servers you cannot access

- Analyzed by third-party algorithms for purposes beyond your query

- Shared with partners under broad terms of service agreements

- Vulnerable to data breaches affecting millions of users simultaneously

The European Union’s GDPR provides some protection for past data collection, but offers little recourse for information you voluntarily share with AI systems.

“AI chatbot privacy isn’t ONE problem — it’s TWO. GDPR protects the past. What we tell AI? Unregulated. We don’t just use AI… we CONFESS to it.” — @thinugasayon

The Sri Lankan Treasury Case: A Warning from Government Finance

Recent criticism of a Treasury fund fraud in Sri Lanka illustrates how basic financial controls can fail catastrophically. Opposition MP Harsha de Silva pointed out that “standard financial controls seemed to have been ignored” when millions were transferred without proper verification.

“He questioned why a small test payment had not been made first to confirm the destination account before millions were sent. Harsha also said payment instructions and bank account details should have been checked against the original contract, arguing that such safeguards are common practice.” — @NewsWireLK

If a government treasury with multiple oversight layers can fall victim to financial fraud, individual users who share detailed information with AI chatbots, and who have no comparable safeguards, face considerably higher risks.

Actionable Defense Strategies

Treat AI chatbots like public forums. Never share information you wouldn’t post on social media. When seeking financial advice, use hypothetical scenarios rather than your actual numbers. Instead of “I have $50,000 in my 401k,” ask “What should someone do with a mid-sized retirement account?”
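The swap from exact figures to a general scenario can also be automated. The following is a rough sketch under stated assumptions: the `describe` and `generalize` helpers are hypothetical, the size thresholds are arbitrary values chosen for illustration, and only amounts written with a leading dollar sign are recognized.

```python
import re

# Matches amounts such as $50,000, $50000 or $1,200.50 (dollar sign required).
AMOUNT = re.compile(r"\$\s?((?:\d{1,3}(?:,\d{3})+|\d+)(?:\.\d+)?)")


def describe(amount: float) -> str:
    """Map an exact figure to a coarse label. Thresholds are assumed, not standard."""
    if amount < 10_000:
        return "a small amount"
    if amount < 100_000:
        return "a mid-sized amount"
    return "a large amount"


def generalize(text: str) -> str:
    """Replace each dollar amount in the text with its coarse label."""
    def swap(match: re.Match) -> str:
        value = float(match.group(1).replace(",", ""))
        return describe(value)

    return AMOUNT.sub(swap, text)


print(generalize("I have $50,000 in my 401k"))
```

The coarse label keeps enough context for general guidance while withholding the figure that would contribute to a wealth profile.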

Apply the test payment principle from traditional banking: verify with something small before committing anything valuable. Before trusting any AI-powered financial service with sensitive data, research its security practices, data retention policies, and breach response procedures.

Maintain separate channels for different types of financial activities. Use established, regulated platforms for actual transactions while limiting AI interactions to general educational queries.

The convenience of AI financial advice is undeniable, but protecting your financial identity requires the same vigilance our predecessors applied to protecting state secrets. In both cases, the information seems harmless until it falls into the wrong hands.