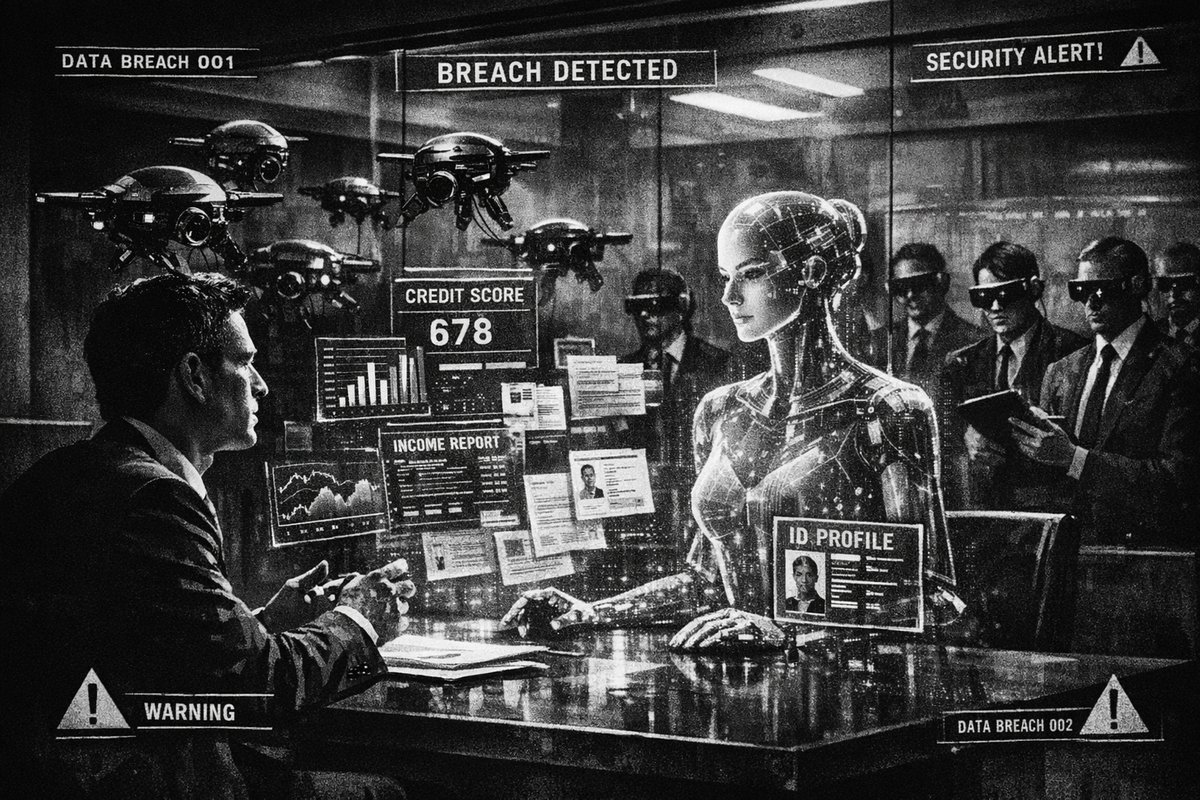

Americans are rushing to AI chatbots for financial advice in unprecedented numbers, but they’re walking into a digital privacy minefield. The convenience of asking an AI about your retirement savings, debt consolidation, or investment strategy comes with a devastating hidden cost: your most sensitive financial data is being exposed, stored, and potentially weaponized against you.

This isn’t just another tech privacy concern—it’s a fundamental threat to financial security that echoes some of history’s most dangerous information leaks, but with far more personal and lasting consequences.

The New Gold Rush of Personal Financial Data

When people ask AI systems about their financial situations, they’re not just sharing numbers. They’re revealing their complete financial DNA: income levels, debt ratios, spending patterns, investment goals, and family financial obligations. Taken together, this data is potentially far more revealing, and far more dangerous in the wrong hands, than the card numbers exposed in the credit card breaches of the past decade.

Consider the Equifax breach of 2017, which exposed the personal information of about 147 million Americans. That was devastating, but it was largely static data: names, Social Security numbers, birth dates, and addresses. AI financial conversations contain dynamic, contextual information that can paint a detailed picture of your financial vulnerabilities, future plans, and decision-making patterns.

“Retrieval-Augmented Generation systems create a new privacy surface. If retrieval outputs are exposed, sensitive data can leak far beyond the intended user. Private AI has to protect retrieval, not just generation.” — @SecretNetwork

What You Should Never Share With AI Financial Tools

The temptation to treat AI like a trusted financial advisor is understandable, but certain information should remain absolutely off-limits:

- Exact account balances and account numbers

- Social Security numbers or tax identification numbers

- Specific investment positions and portfolio allocations

- Detailed income information tied to your identity

- Family financial dependencies and inheritance details

- Debt amounts with creditor names

- Real estate values and mortgage details

Instead, ask hypothetical questions and use rounded figures or ranges. Replace “I have $47,312 in my 401(k)” with “someone with around $50,000 in retirement savings.”
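If you want to make that habit automatic, you can scrub a question before pasting it into a chatbot. The following is a minimal sketch in Python, not a complete privacy tool: the function name `scrub_prompt` and the three patterns are assumptions made for illustration, and they will miss identifiers written in other formats (names, addresses, creditor names, account numbers with spaces or dashes).

```python
import re

# Illustrative patterns only (assumptions): real identifiers come in many formats.
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
DOLLAR_RE = re.compile(r"\$\d{1,3}(?:,\d{3})+(?:\.\d+)?|\$\d+(?:\.\d+)?")
ACCOUNT_RE = re.compile(r"\b\d{8,17}\b")  # long bare digit runs


def _round_amount(match: re.Match) -> str:
    """Round an exact dollar amount to one significant figure."""
    value = float(match.group(0)[1:].replace(",", ""))
    magnitude = 10 ** (len(str(int(value))) - 1)
    rounded = int(round(value / magnitude) * magnitude)
    return f"around ${rounded:,}"


def scrub_prompt(text: str) -> str:
    """Remove SSN-like and account-like numbers and blur dollar amounts."""
    text = SSN_RE.sub("[SSN removed]", text)
    # Round amounts before looking for long digit runs, so a large
    # unformatted amount is not mistaken for an account number.
    text = DOLLAR_RE.sub(_round_amount, text)
    text = ACCOUNT_RE.sub("[account number removed]", text)
    return text


question = "I have $47,312.55 in my 401(k), account 000123456789, SSN 123-45-6789."
print(scrub_prompt(question))
# I have around $50,000 in my 401(k), account [account number removed], SSN [SSN removed].
```

Treat a script like this as a safety net, not a guarantee. It only catches the patterns it knows about, so rereading the question before you send it still matters.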

The Historical Parallel: Financial Surveillance in Authoritarian Regimes

This AI privacy crisis has chilling historical precedents. In Nazi Germany, detailed financial records helped authorities identify and target Jewish families for asset seizure. The Stasi in East Germany used financial surveillance to control and manipulate citizens. The difference now is scale and sophistication—AI systems can analyze and cross-reference financial behavior patterns across millions of users simultaneously.

The potential for abuse extends beyond government surveillance. Insurance companies, employers, and financial institutions could potentially access or purchase insights derived from AI financial conversations to discriminate against individuals based on their financial vulnerabilities or future plans.

“This is very concerning. Who was able to access this information and how? This basically means that everything everyone is putting into any AI Chatbot will easily be handed over to authorities. You have no more free freedom. Democracy? Bye-bye.” — @AdelleNaz

The Technical Reality: Your Data Lives Forever

Unlike human financial advisors, who are bound by fiduciary duties and regulatory oversight, general-purpose AI chatbots are not held to comparable standards when they discuss your finances. Some major AI platforms state that they retain conversation data for around 30 days for abuse monitoring, but the reality is more complex:

- Training data integration: Your conversations may be used to improve AI models

- Third-party sharing: AI companies often share data with partners and service providers

- Government access: Law enforcement can potentially subpoena AI conversation records

- Security breaches: Centralized AI databases present attractive targets for cybercriminals

Building Financial Privacy in the AI Age

The solution isn’t to avoid AI entirely—it’s to approach it strategically. Use AI for general financial education and hypothetical scenario planning, but keep your specific financial details offline. Consider these alternatives:

- Traditional fee-only financial advisors bound by fiduciary standards

- Anonymous financial planning tools that don’t require personal data

- Educational AI conversations using hypothetical scenarios instead of real numbers

- Local financial planning software that doesn’t transmit data to the cloud

“SECURITY FIRST — BUILDING AI FINANCIAL INFRASTRUCTURE THAT LASTS Speed gets attention. But security is what sustains systems at scale.” — @yabarich

The Path Forward: Regulation and Personal Responsibility

The financial AI privacy crisis demands immediate regulatory intervention. We need AI-specific financial privacy laws that establish clear boundaries around data collection, retention, and use. But regulation moves slowly, and the privacy damage is happening now.

The responsibility falls on individual users to protect themselves by understanding the true cost of AI financial advice. The few minutes you save by asking an AI about your retirement planning could result in decades of privacy vulnerability.

Your financial privacy is not a fair trade for convenience. The smartest financial decision you can make in 2026 might be keeping your real numbers out of AI conversations entirely.